AI & the Future of System Mapping

(1) A critical reflection on integrating AI tools into System Mapping processes.

This article is part of a series that explores the risks and opportunities of using AI in System Mapping processes.

The latest developments in AI are opening up remarkable new possibilities for Systems Thinking and System Mapping. It is fascinating to observe how quickly and effortlessly complex content can be summarised, analysed, and visualised.

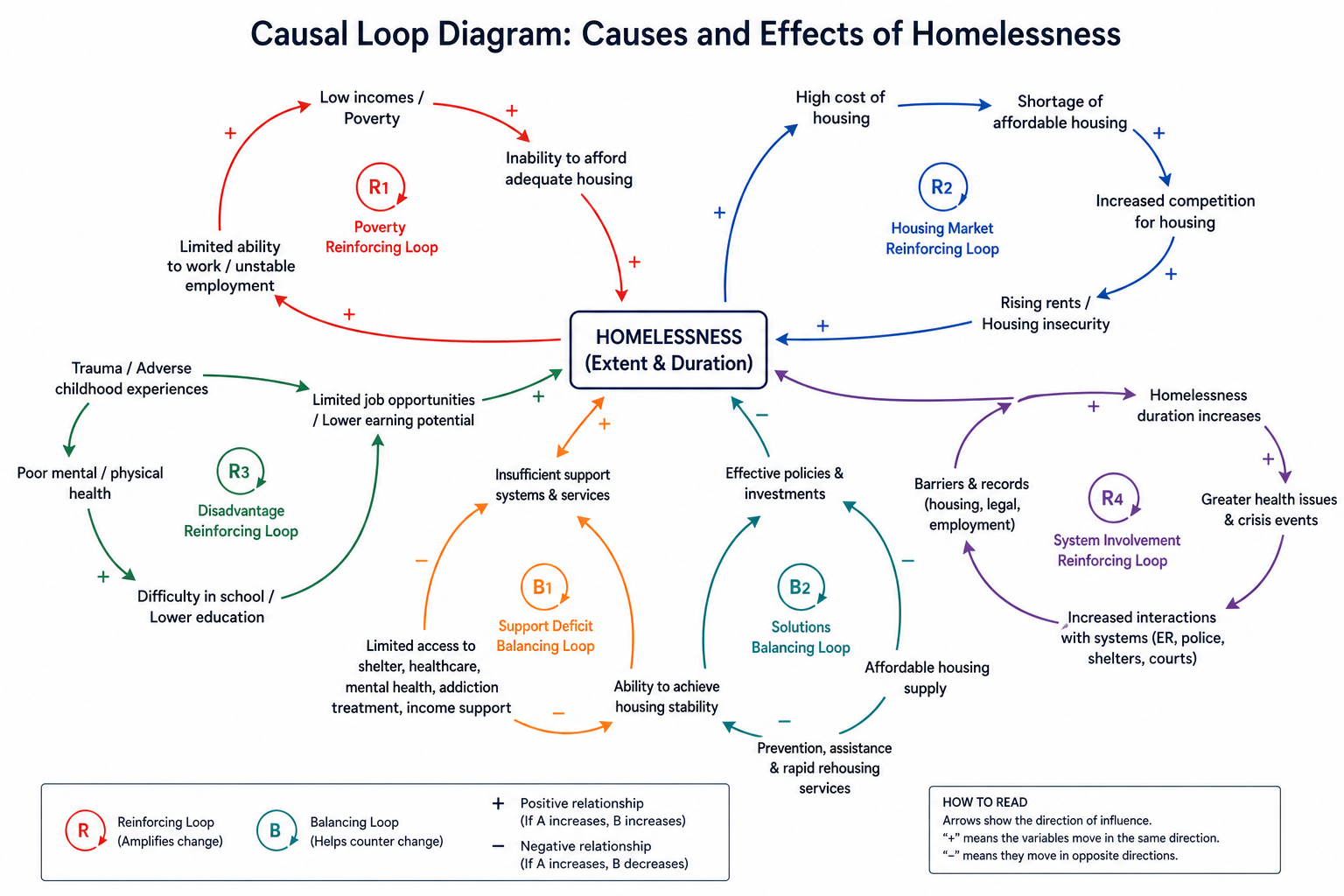

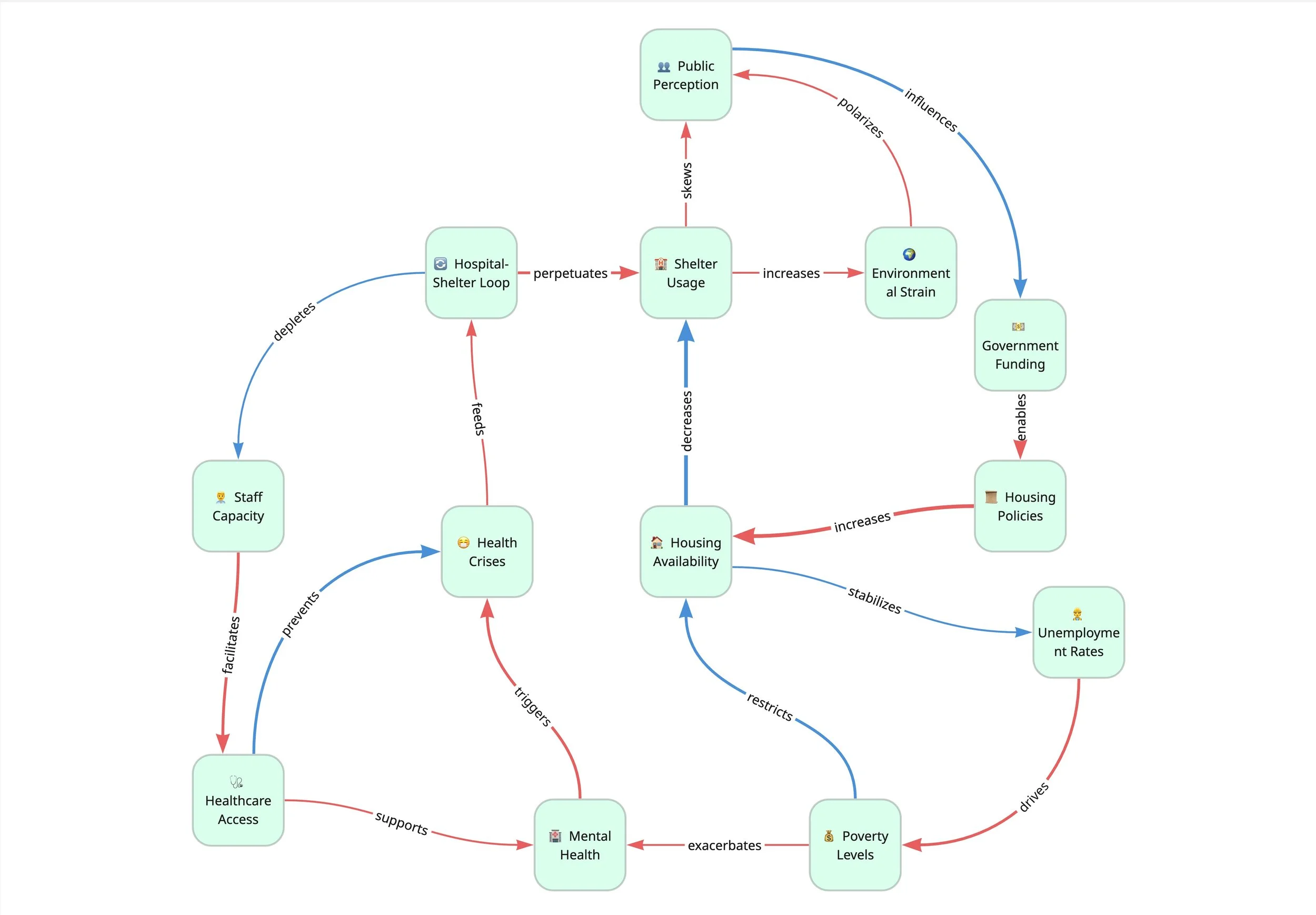

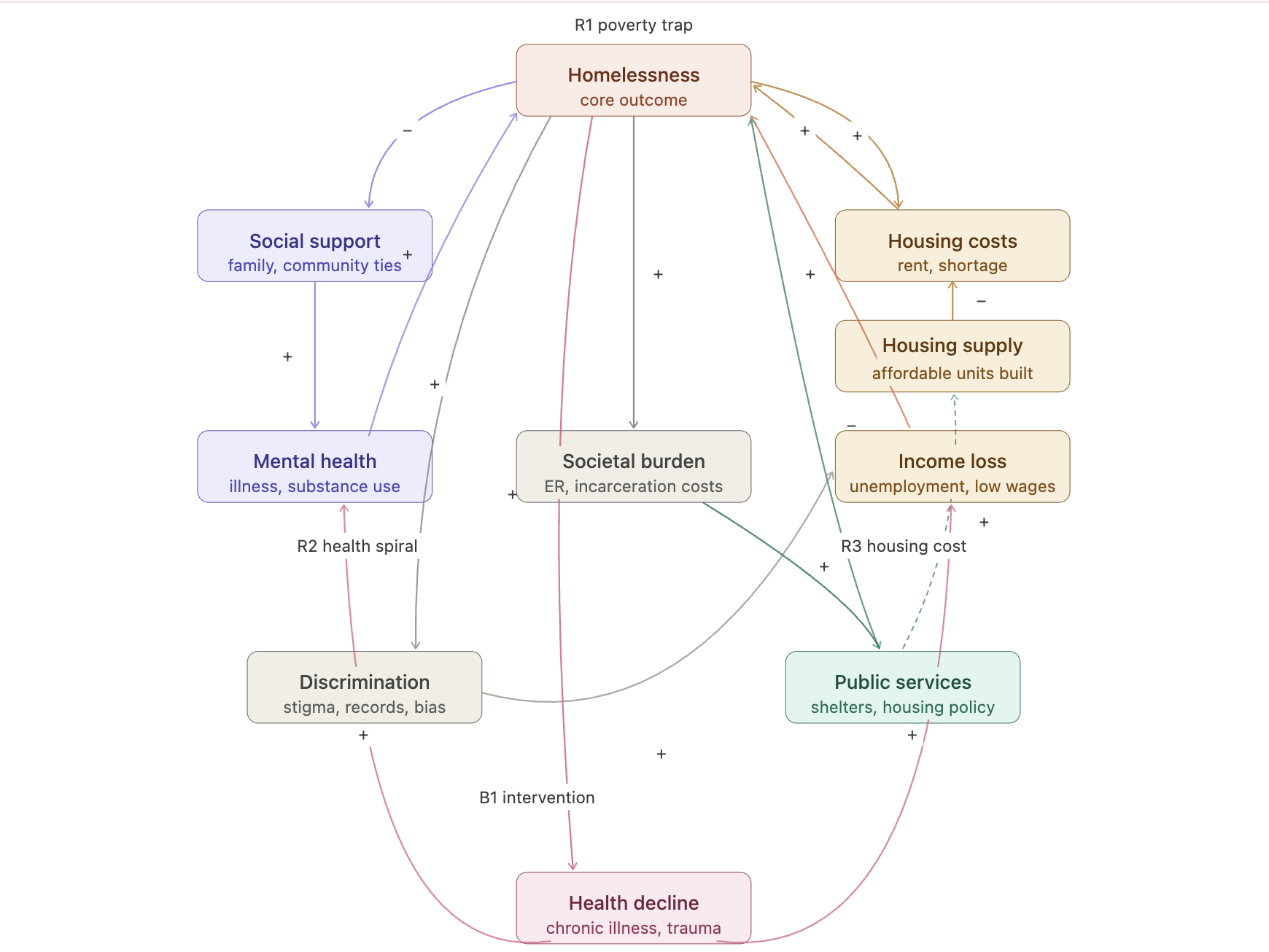

The three System Maps shown here depict the causes and effects of homelessness, created with ChatGPT (1), the Miro plugin “Systemic” (2), and Claude (3). Even with a very basic prompt (“Create a causal loop diagram exploring the causes and effects of homelessness.”), the results we get in seconds are not perfect, but they are increasingly valuable to work with.

Yet precisely because these possibilities are unfolding so rapidly, one question increasingly occupies us at the System Mapping Academy:

Can AI tools actually hinder Systems Thinking by presenting us with ready-made syntheses, without us having gone through the actual thinking process at all?

The dilemma of frictionless answers

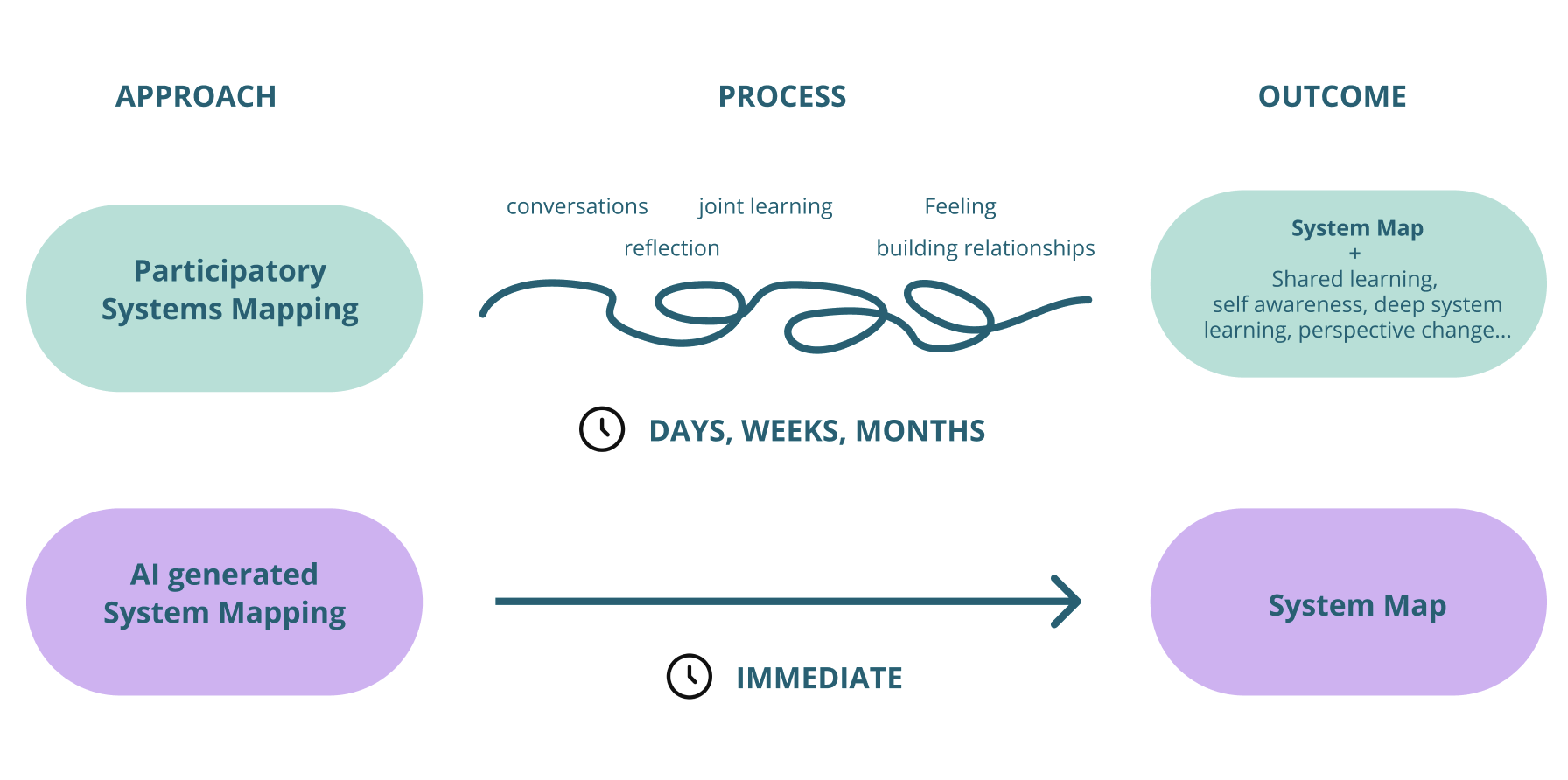

Put in extreme terms, one could argue that the direct AI route, with its fast, frictionless, and unreflective path to a finished System Map, is the very antithesis of Systems Thinking.

Over the past months, we conducted various experiments in an attempt to get a clearer sense of where the real benefits and risks lie. This article is the first in a series sharing what we learned.

Throughout our practice over the past years, one insight has stood out above all others: System Mapping is not primarily about the end product, the finished map, the visualisation, the synthesis. It is about the process itself: confronting complexity, immersing ourselves in it, tolerating the overwhelm, and learning along the way.

When we use AI tools to leap directly to a synthesis or a finished System Map, these intermediate steps fall away, or are at least significantly shortened. The personal learning within the process, the struggle with uncertainty, the shifts in perspective: all of this no longer happens inside us, but inside the model.

And there is a deeper risk here that goes beyond individual learning. Systems work is almost always a collective endeavour. Ideally, it involves multiple actors, each carrying their own understanding of how the system operates. When AI creates a shortcut from problem to supposedly finished analysis in seconds, it skips over precisely the conversations and discussions through which shared meaning is built. The danger is not just that one person learns less, but that no shared learning happens at all between the people who need to act together within the system.

Without friction in the encounter with complexity, there is hardly any deeper learning.

AI’s fast track to results neglects the learning that happens in the process

Hidden biases can reinforce current power structures

AI models trained predominantly on documented, mainstream, and institutionally produced knowledge will tend to populate a system map with familiar actors, dominant narratives, and well-rehearsed framings, leaving the informal, the marginalised, and the emergent in the shadows. Similarly, when large language models are used to support system analysis (drafting problem statements, synthesising literature, generating recommendations), the model's training corpus shapes what gets surfaced.

Western, English-language, and institutional perspectives are heavily overrepresented, while community knowledge, indigenous systems thinking, non-academic expertise, and Global South contexts are systematically underrepresented.

Crucially, the AI does not flag this imbalance. These are just two examples of a much broader issue of underlying biases in AI models.

We must critically examine which sources are feeding the analysis, whose knowledge is represented, and whose is absent, and remain sceptical of outputs that appear comprehensive but may simply reflect the loudest and most documented voices.

The “Human in the (Feedback) Loop”

That said, AI is not going away, and its influence on how we work will only deepen. The real challenge is not whether to engage with it, but how to do so wisely: leveraging its remarkable capabilities while remaining intentional about when, how, and for what purpose we bring it into the room. Used thoughtfully and with clear eyes about its limitations, the potential benefits for system mapping practice are significant.

If AI tools are used to map systems, identify leverage points, or generate policy recommendations without sustained human interrogation, we risk outsourcing precisely the critical capacity that makes system analysis valuable.

As in every other application of AI technology, humans should always retain meaningful oversight, judgment, and the ability to intervene at critical points in the process.

In system analysis, this is not just a safeguard against error; it is a condition for good practice. System analysis is fundamentally interpretive work. It requires asking:

Whose perspective is missing?

What has been left outside the system boundary?

What assumptions are driving this framing?

These are not questions an AI tool can answer on its own, because they require normative judgment: a sense of what matters, to whom, and why.

Guidelines for AI-enhanced System Mapping

Through our experiments, a number of insights emerged that we have since translated into draft guidelines for our daily practice.

Know your data sources

Prioritise data sources that you can comprehend, or at minimum trace: know who was involved in their creation, what data points were included, and what was left out. Favour trusted, transparent sources over convenient ones. Web-based AI applications bring additional opacity: they often draw on sources that are difficult to audit, and their outputs can be hard to attribute or verify. Use open web search only when no more traceable alternative exists, and always treat its outputs with heightened critical scrutiny.

Treat AI Output as a Starting Point, not an Answer

Everything AI generates, whether a draft map, a stakeholder perspective, or a pattern observation, should be treated as a provocation to react to, not a conclusion to accept.

Stay Curious About What Is Missing

AI is trained on what has been documented and published, which means it systematically underrepresents informal knowledge, marginalised voices, and lived experience. In systems work, what is absent from the map is often as important as what is visible. Human judgment must actively compensate for this blind spot.

Slow down at the Moments That Matter

AI can create a dangerous illusion of progress: a draft map produced in minutes can feel like understanding has been reached when it hasn't. The most important moments in system mapping require human time and cannot be accelerated. Know when to put the tool down.

Name When You Are Using It and Why

Transparency about AI involvement matters, both within the team and with wider stakeholders. Being explicit about which parts of the process involved AI, and what role it played, builds trust and keeps the group honest about where human thinking ends and machine output begins.

The thoughts in this article are deliberately framed as first impulses. We make no claim to completeness and are convinced that our view on this will continue to evolve through further experiments and conversations.

In the following blog articles, we want to further explore the different roles AI can take within the mapping process, share what we have learned from working with tools and approaches, and reflect on the potential that AI-enhanced mapping processes can bring.